This is the tenth live-blog of my spring 2026 DERs class.

The last post introduced buildings from an energy perspective and discussed why a DER student might want to learn about them. This post discusses how we model the thermal dynamics of buildings using (surprise!) linear ordinary differential equations.

As discussed in the last post, almost all thermal equipment in buildings has some flexibility in the timing of its electricity use. That flexibility can be used to shift energy use away from times when electricity prices are high, when electricity generation is dirty, or when electrical infrastructure is strained.

To understand how much flexibility thermal equipment has, and how to use it, it helps to understand how indoor temperatures evolve and the role thermal equipment plays in that evolution.

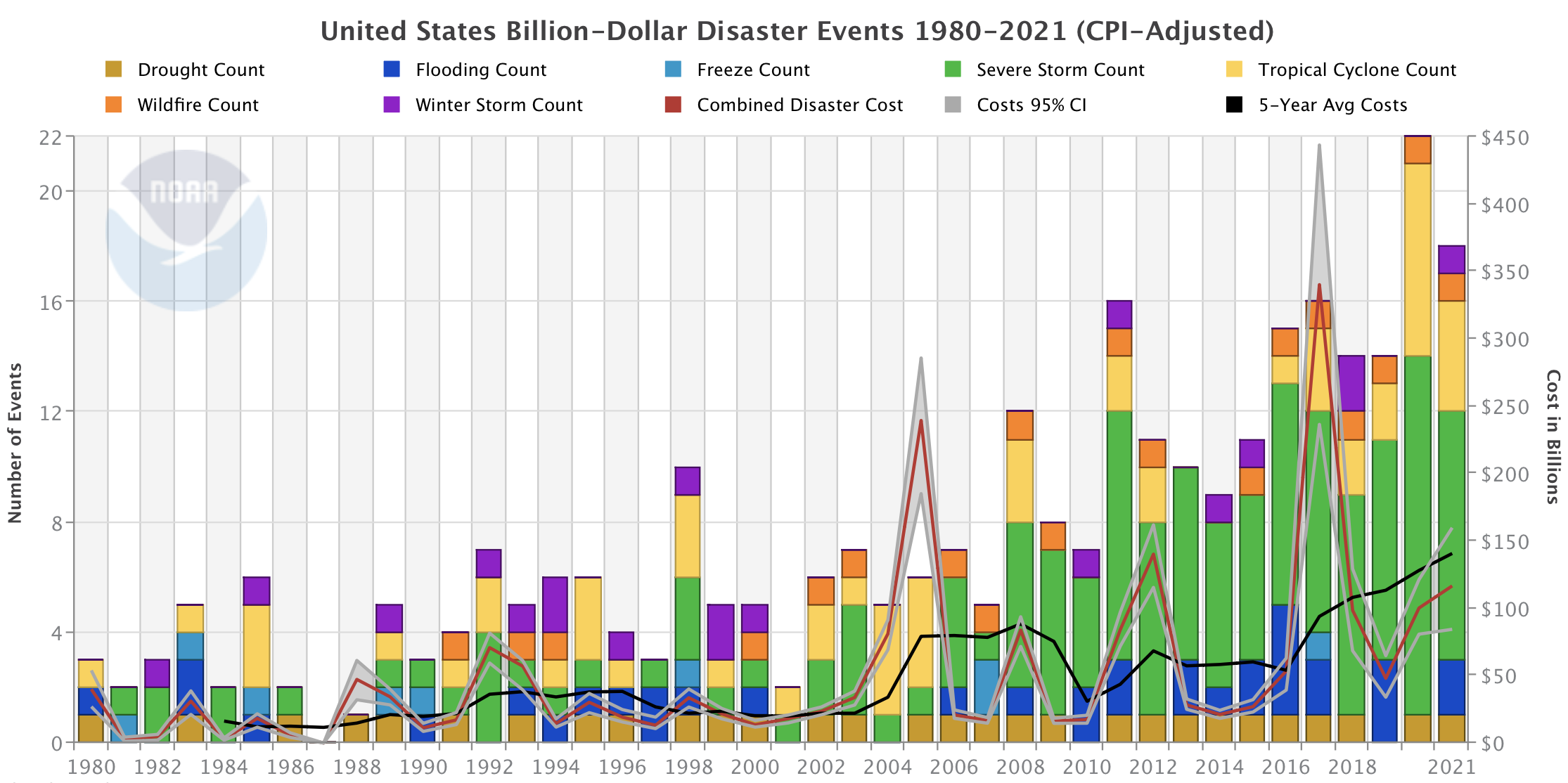

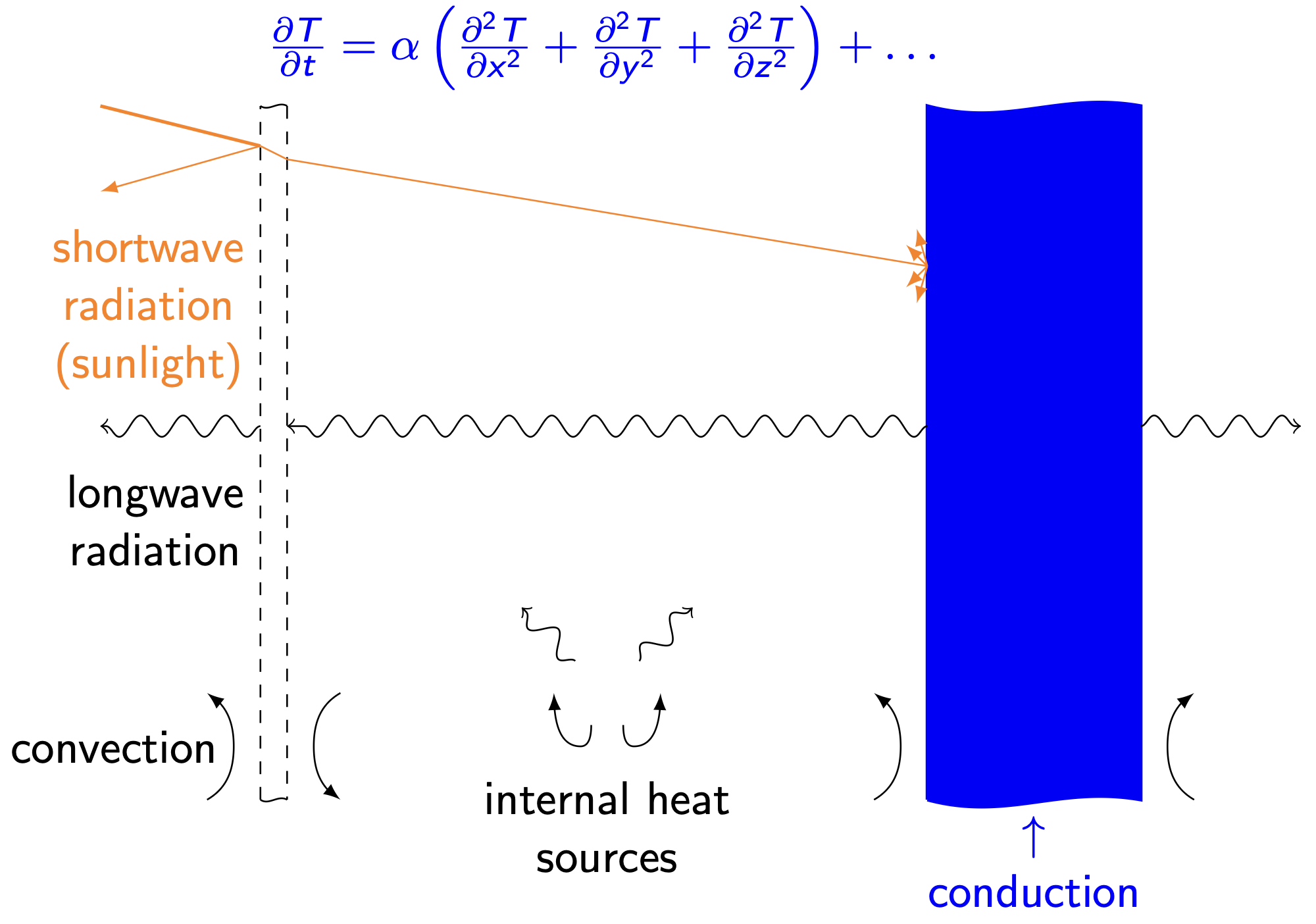

Detailed modeling of indoor temperature dynamics is hard. A “true” model (sketched below for a very simple building geometry) would keep track of continuous temperature distributions within the indoor air, walls, windows, roof, and foundation. It would account for conduction and air infiltration through the building fabric, for shortwave radiation from lights and the sun, for longwave radiation exchange between surfaces, for convective heat transfer between solids and fluids, and for all heat transfer driven by occupants and their devices. The model structure would be a set of coupled, nonlinear partial differential equations whose solution we could only hope to approximate using computationally intensive numerical solvers.

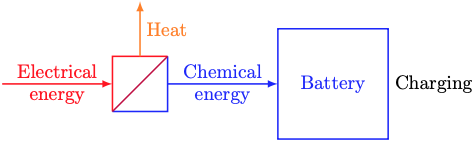

Thankfully, there’s a much simpler modeling approach that captures the dominant physics that drive indoor temperature dynamics. That approach is called thermal circuit modeling. It describes temperature dynamics by analogy to (direct current) electrical circuits. In that analogy, temperature plays the role of voltage and heat[^1] plays the role of charge, so heat flow plays the role of current. Resistors are things that impede the flow of heat. Capacitors are things that store heat.

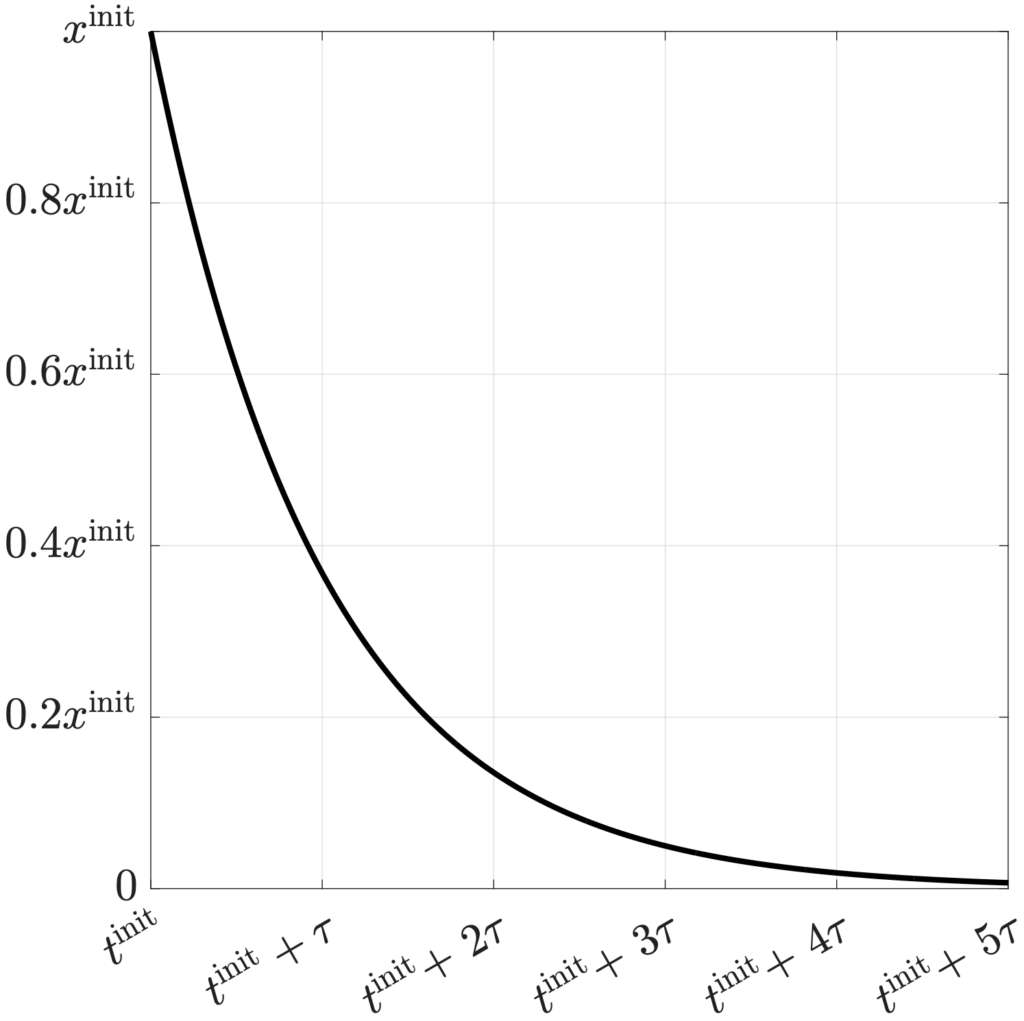

The figure below shows a thermal circuit with one resistor and one capacitor.

The state of this thermal circuit is the indoor temperature at time t, T(t). The outdoor temperature θ(t) plays the role of a driving voltage. The heat flows q_c(t) and q_e(t) act as current sources. They come from controlled thermal equipment and exogenous sources (sunlight, body heat, etc.), respectively.

The 1R1C circuit’s governing equation is a first-order linear ODE. It can be derived by applying Kirchhoff’s Current Law at the node labeled T(t) and Ohm’s Law to the temperature (voltage) drop across the resistor. With the ODE in hand, we can apply the usual time-discretization scheme to produce an algebraic equation that can be programmed on a computer to simulate the indoor temperature’s dynamic response to changing outdoor temperatures and other boundary conditions.
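For readers who want the algebra spelled out, here is a sketch of that derivation. The symbols R (thermal resistance), C (thermal capacitance), Δt (time step), k (time index), and a are my notation for this sketch. The discretization shown assumes a zero-order hold, meaning the inputs are treated as constant over each time step. That is an assumption about what "the usual scheme" means, and other schemes, such as forward Euler, give a slightly different update.

$$
\begin{aligned}
&\text{Kirchhoff's Current Law at node } T(t): && C \frac{dT(t)}{dt} = q_R(t) + q_c(t) + q_e(t) \\
&\text{Ohm's Law across the resistor:} && q_R(t) = \frac{\theta(t) - T(t)}{R} \\
&\text{Governing ODE:} && C \frac{dT(t)}{dt} = \frac{\theta(t) - T(t)}{R} + q_c(t) + q_e(t) \\
&\text{Zero-order-hold discretization:} && T(k+1) = a\,T(k) + (1 - a)\left[\theta(k) + R\left(q_c(k) + q_e(k)\right)\right], \quad a = e^{-\Delta t/(RC)}
\end{aligned}
$$

With constant inputs, the update settles at the steady-state temperature θ + R(q_c + q_e), which is a handy sanity check on any implementation.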

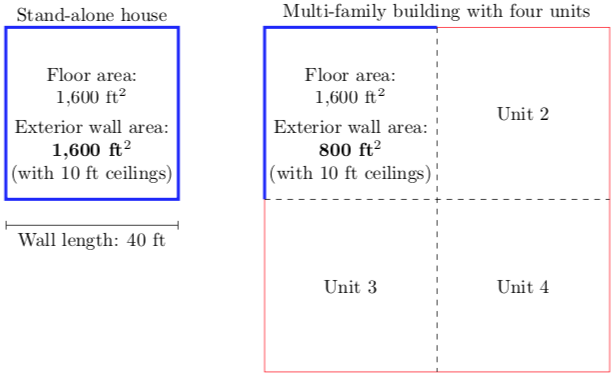
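Here is a minimal Python sketch of that kind of simulation. It assumes the inputs are held constant over each time step (a zero-order hold), which may or may not match the discretization scheme used in class. The function name `simulate_1r1c`, the parameter names, the units, and the numerical values are all illustrative assumptions, not data from a real building.

```python
import math

def simulate_1r1c(T0, theta, q_c, q_e, R, C, dt):
    """Simulate indoor temperature with a 1R1C model (zero-order hold assumed).

    T0: initial indoor temperature (°C)
    theta, q_c, q_e: equal-length sequences of outdoor temperature (°C),
        controlled heat flow (kW), and exogenous heat flow (kW)
    R: thermal resistance (°C/kW); C: thermal capacitance (kWh/°C); dt: step (h)
    """
    a = math.exp(-dt / (R * C))  # fraction of the current temperature retained per step
    T = [T0]
    for th, qc, qe in zip(theta, q_c, q_e):
        # The temperature relaxes toward the steady-state value th + R*(qc + qe).
        T.append(a * T[-1] + (1 - a) * (th + R * (qc + qe)))
    return T

# Illustrative (made-up) parameters: a 10-hour time constant, 15-minute steps.
R, C, dt = 2.0, 5.0, 0.25
n = 400                      # 100 hours of simulated time
theta = [0.0] * n            # constant 0 °C outdoors
q_c = [8.0] * n              # constant 8 kW from the heater
q_e = [1.0] * n              # constant 1 kW from sun, people, devices
T = simulate_1r1c(20.0, theta, q_c, q_e, R, C, dt)
print(f"Final indoor temperature: {T[-1]:.3f} °C")
```

With these constant inputs the indoor temperature should drift from 20 °C toward the steady-state value θ + R(q_c + q_e) = 18 °C. Swapping in time-varying lists for `theta`, `q_c`, and `q_e` gives the dynamic response.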

The 1R1C model parameters and boundary conditions can be specified in a few ways. They can be found from first principles given information about the building geometry and material properties. They can be fit to monthly utility bills and weather statistics. They can be fit to time-series data from a smart thermostat. Each of these approaches has its challenges and merits. That the latter two approaches work at all is a happy consequence of the 1R1C model’s very simple structure. Fitting more complex, nonlinear building thermal models to data from utility bills or smart thermostats is much harder.
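To give a flavor of the smart-thermostat approach, here is a hedged sketch of fitting a 1R1C model by ordinary least squares. It assumes a zero-order-hold discretization, a constant exogenous heat flow, and known equipment heat output at each time step. The function `fit_1r1c` and the synthetic, noise-free data are illustrative inventions. Real thermostat data are noisy and the exogenous heat flow varies, so real fits are messier than this.

```python
import numpy as np

def fit_1r1c(T, theta, q_c, dt):
    """Least-squares fit of R, C, and a constant exogenous heat flow q_e.

    Uses the discrete-time 1R1C model rewritten as a linear regression:
        T[k+1] - theta[k] = a*(T[k] - theta[k]) + b*q_c[k] + c,
    where a = exp(-dt/(R*C)), b = (1 - a)*R, and c = b*q_e.
    T has one more sample than theta and q_c.
    """
    T, theta, q_c = (np.asarray(v, dtype=float) for v in (T, theta, q_c))
    y = T[1:] - theta
    X = np.column_stack([T[:-1] - theta, q_c, np.ones_like(q_c)])
    a, b, c = np.linalg.lstsq(X, y, rcond=None)[0]
    R = b / (1.0 - a)
    C = -dt / (R * np.log(a))
    return R, C, c / b

# Synthetic "thermostat" data from made-up true parameters (no measurement noise).
rng = np.random.default_rng(0)
dt, n = 0.25, 672                                      # 15-minute steps, one week
R_true, C_true, qe_true = 2.0, 5.0, 1.0                # °C/kW, kWh/°C, kW
hours = dt * np.arange(n)
theta = 5.0 + 5.0 * np.sin(2 * np.pi * hours / 24.0)   # daily outdoor temperature cycle
q_c = rng.choice([0.0, 8.0], size=n)                   # heater randomly on or off
a_true = np.exp(-dt / (R_true * C_true))
T = np.empty(n + 1)
T[0] = 20.0
for k in range(n):
    T[k + 1] = a_true * T[k] + (1 - a_true) * (theta[k] + R_true * (q_c[k] + qe_true))

R_hat, C_hat, qe_hat = fit_1r1c(T, theta, q_c, dt)
print(R_hat, C_hat, qe_hat)
```

The fit reduces to a single linear regression only because the 1R1C update is linear in a few lumped coefficients, which is the "very simple structure" at work.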

Higher-order thermal circuit models can represent a wider range of behavior than 1R1C models. They can represent large buildings with thermal coupling between rooms or floors. They can represent the deep thermal mass of concrete, wood, and other dense building materials. Higher-order models can be formed and discretized like 1R1C models.
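As one hypothetical example of a higher-order model, the sketch below simulates a 2R2C circuit with two states: the indoor air temperature and the temperature of a lumped thermal mass. The circuit topology, the names `R_o`, `R_m`, `C_a`, `C_m`, and the numerical values are assumptions made for illustration. The discretization here is backward (implicit) Euler, chosen because it needs only NumPy and stays stable for stiff circuits. It is not necessarily the scheme used for the 1R1C model.

```python
import numpy as np

def simulate_2r2c(x0, theta, q, R_o, R_m, C_a, C_m, dt):
    """Simulate a 2R2C thermal circuit with backward Euler.

    States x = [T_air, T_mass] (°C). Inputs u = [theta, q], where theta is the
    outdoor temperature (°C) and q is the total heat flow into the air (kW).
    R_o couples air to outdoors, R_m couples air to mass (°C/kW).
    C_a and C_m are the air and mass capacitances (kWh/°C). dt is in hours.
    """
    # Continuous-time model dx/dt = A x + B u, from Kirchhoff's Current Law at each node.
    A = np.array([[-(1 / R_o + 1 / R_m) / C_a, 1 / (R_m * C_a)],
                  [1 / (R_m * C_m),            -1 / (R_m * C_m)]])
    B = np.array([[1 / (R_o * C_a), 1 / C_a],
                  [0.0,             0.0]])
    M = np.eye(2) - dt * A          # backward Euler: M x[k+1] = x[k] + dt * B u[k]
    x = np.zeros((len(theta) + 1, 2))
    x[0] = x0
    for k in range(len(theta)):
        u = np.array([theta[k], q[k]])
        x[k + 1] = np.linalg.solve(M, x[k] + dt * (B @ u))
    return x

# Illustrative (made-up) parameters: light air node, heavy mass node.
n = 2000                             # 500 hours at 15-minute steps
theta = np.zeros(n)                  # constant 0 °C outdoors
q = np.full(n, 9.0)                  # constant 9 kW of total heat input
x = simulate_2r2c([20.0, 20.0], theta, q, R_o=2.0, R_m=0.5, C_a=0.5, C_m=10.0, dt=0.25)
print(x[-1])
```

With constant inputs, both temperatures should settle at θ + R_o q = 18 °C, the same steady state a 1R1C model with R = R_o would reach. The difference shows up in the transient: the air temperature responds quickly at first, then slowly as the thermal mass catches up.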

[^1]: For the nitpickers, yes, technically heat isn’t really a thing. Scientists used to think heat was an intangible, invisible fluid that flowed from hot things into nearby cold things. It’s not. Heat is just a way to transfer energy, like work (exerting a force over a distance). That’s the definition of heat: energy transfer that’s not work. Alternatively and equivalently, heat is energy transfer driven only by temperature differences.